What Is an MCP Server? Architecting Argo CD with AI-Driven Control Planes

Sharad Regoti

Over the past few years, LLMs have evolved from standalone systems limited to their training data into systems that access real-time information using RAG and interact with applications like email or Slack through function calling.

However, the adoption of these techniques has been limited. Developers face challenges around interoperability between different tools and models, the statelessness of function calls, and the absence of a standard for how applications interact with LLMs.

This is where the Model Context Protocol (MCP) comes in. MCP is an open standard developed by Anthropic that standardizes how applications provide context to LLMs.

Within a few months of its launch, MCP has been adopted across various industries, with developers building MCP servers to connect LLMs to multiple systems. One such integration is the Argo CD MCP Server by Akuity.

In this post, we will explore what an MCP server is, how it works, and how the Argo CD MCP Server enables new use cases by integrating AI and infrastructure tooling into modern development workflows.

What is the Model Context Protocol (MCP) Server?

The Model Context Protocol (MCP) is an open standard developed by Anthropic that standardizes how applications provide context to LLMs. Think of MCP like a USB-C port for AI applications. Just as USB-C provides a standardized way to connect your devices to various accessories, MCP provides a standardized way to connect AI models to different data sources and tools.

It allows users to trigger actions and access data from these external systems directly through their AI assistant, eliminating the need for custom-built integrations for each tool or AI.

Why Should Developers Care About MCP?

As described above, MCP solves the problem of connecting AI agents to external tools and data sources in a standardized way.

This standardization improves developer workflow by:

Enabling developers to create and share reusable tools that can be easily integrated into different AI applications.

Reducing the amount of custom code required for application integration.

Making it easier to build AI agents and scale AI systems that interact with the real world.

Let’s understand in detail what problems MCP solves and how it differs from other approaches.

What Problems Does MCP Solve?

Before MCP, integrating AI assistants with external systems required custom code for each service. This approach works for an individual integration, but across many services it leads to a fragmented and difficult-to-manage ecosystem.

For example, OpenAI offers GPT actions for customized GPTs. Integrating a Custom GPT with a third-party service like Gmail only works within OpenAI's ecosystem. Other models, like Gemini or Claude, require separate integrations.

This lack of standardization leads to the following problems:

"N x M" Integration Problem:

As the number of AI models (M) and external tools or data sources (N) grows, integration becomes more complex. Every model-tool combination requires its own custom logic, so the total number of integrations grows as N x M.

Maintenance and Updates: Updating integrations or switching between different tool providers is challenging without a standardized protocol.

Scaling Challenges: As AI systems become more complex and need to interact with more external resources, the complexity of managing integrations can become overwhelming.

MCP addresses this fragmentation by providing a single protocol for all interactions between AI assistants and tools. Developers only need to implement MCP once to make their application available to multiple AI models, and AI platforms only need to support MCP to connect with an ecosystem of tools. The number of integrations then grows as N + M rather than N x M.
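The integration arithmetic can be made concrete with a small sketch. The function names here are illustrative and not part of any MCP tooling; the only assumption is that each model needs one MCP client and each tool needs one MCP server.

```python
def point_to_point_integrations(num_models: int, num_tools: int) -> int:
    """Without a shared protocol, every model needs a connector to every tool."""
    return num_models * num_tools


def mcp_integrations(num_models: int, num_tools: int) -> int:
    """With MCP, each model ships one client and each tool ships one server."""
    return num_models + num_tools


if __name__ == "__main__":
    for models, tools in [(3, 5), (5, 20), (10, 50)]:
        print(
            f"{models} models x {tools} tools: "
            f"{point_to_point_integrations(models, tools)} custom integrations "
            f"vs {mcp_integrations(models, tools)} MCP implementations"
        )
```

For 5 models and 20 tools, that is 100 custom integrations against 25 MCP implementations, and the gap widens as either number grows.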

How Is MCP Different From Other AI Integration Methods?

Approaches like Retrieval-Augmented Generation (RAG), function calling, ChatGPT plugins, and frameworks like LangChain have been widely used to integrate LLMs with external systems and data sources.

Unlike the above approaches, MCP is a protocol that governs client and server communication. It does not replace the above methods. These methods are still used during the implementation of MCP servers when required.

In essence, MCP aims to be the HTTP equivalent for AI agents, providing a standardized communication layer.

How the Model Context Protocol Enables AI Interactions

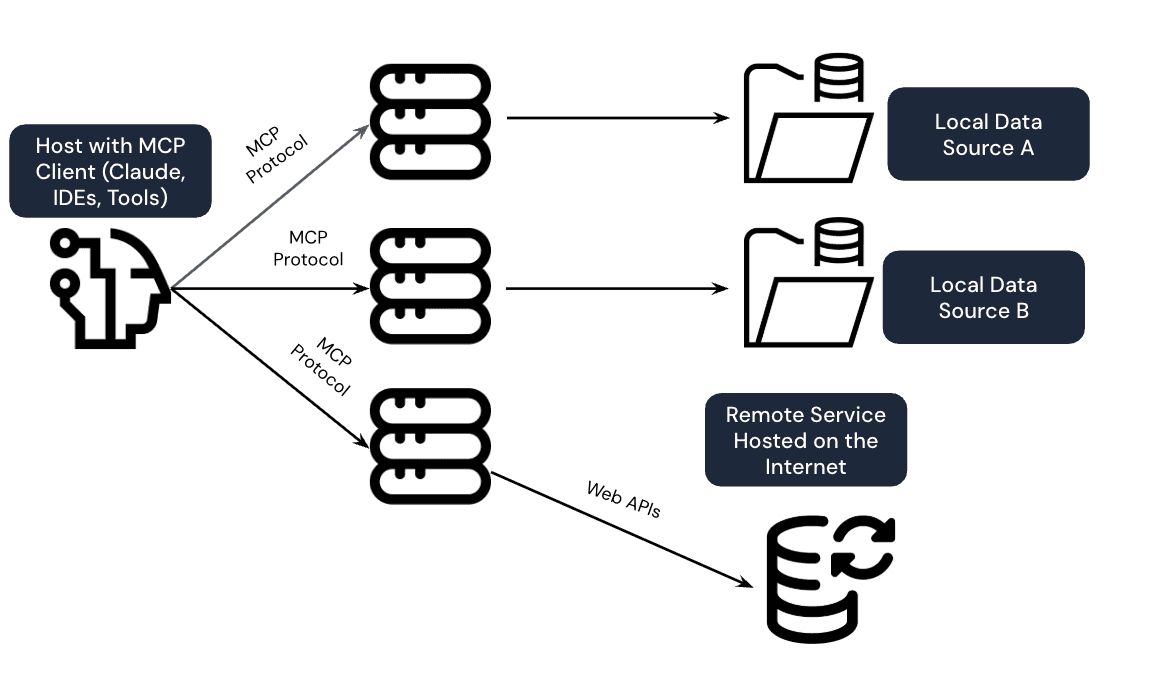

In MCP, AI assistants interact with external tools through a client-server architecture in which a host application can connect to multiple servers.

The Three Core Components

The MCP architecture consists of three main components:

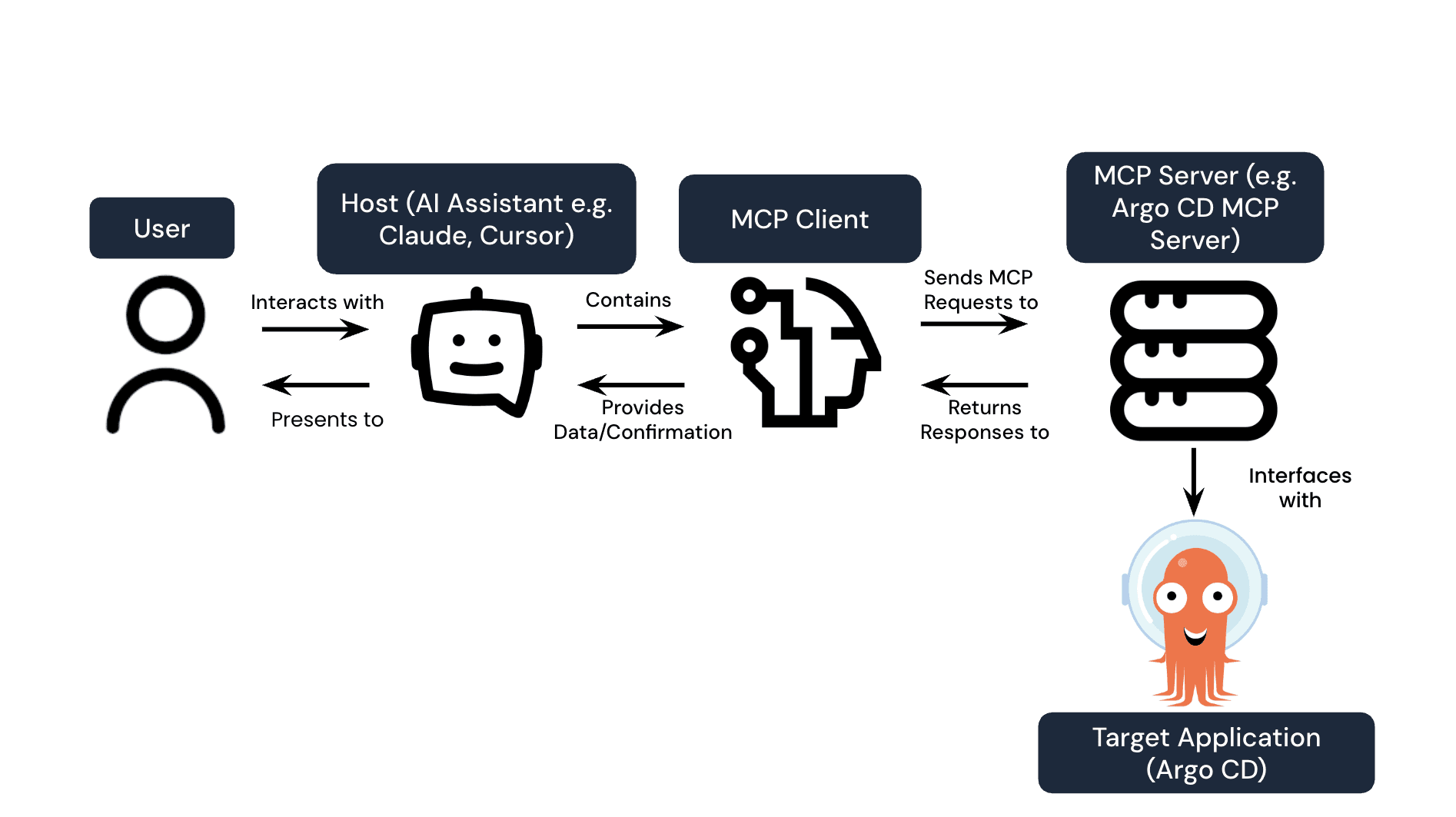

Host: This is the AI tool or assistant that the user interacts with, such as Claude, Cursor, or Zed. The host initiates requests to the MCP client.

Client: The MCP client resides within the host application. It acts as a connector, translating the AI's intentions into MCP requests and forwarding them to the appropriate MCP server.

Server: The MCP server is an interface built for the target application (e.g., Argo CD). It defines the tools (actions) and resources (data) the AI assistant can access or trigger. The server processes requests from the client and returns responses.

The diagram above illustrates these components.
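The relationship between the three components can be sketched in a few lines of Python. This is a toy in-memory model, not the MCP SDK: the class names `Host`, `Client`, and `StubServer` and the `sync_application` tool are hypothetical, and real clients talk to servers over a transport rather than by direct method calls.

```python
from typing import Any, Callable, Dict


class StubServer:
    """Stands in for an MCP server built for one target application."""

    def __init__(self, name: str, tools: Dict[str, Callable[..., Any]]) -> None:
        self.name = name
        self.tools = tools

    def handle(self, tool: str, arguments: Dict[str, Any]) -> Any:
        return self.tools[tool](**arguments)


class Client:
    """Lives inside the host; one client per server connection."""

    def __init__(self, server: StubServer) -> None:
        self.server = server

    def call(self, tool: str, arguments: Dict[str, Any]) -> Any:
        return self.server.handle(tool, arguments)


class Host:
    """The AI application the user talks to; it can hold many clients."""

    def __init__(self) -> None:
        self.clients: Dict[str, Client] = {}

    def connect(self, server: StubServer) -> None:
        self.clients[server.name] = Client(server)

    def request(self, server_name: str, tool: str, arguments: Dict[str, Any]) -> Any:
        return self.clients[server_name].call(tool, arguments)


host = Host()
host.connect(StubServer("argocd", {"sync_application": lambda app_name: f"synced {app_name}"}))
host.connect(StubServer("calendar", {"list_events": lambda day: f"no events on {day}"}))
print(host.request("argocd", "sync_application", {"app_name": "guestbook"}))
```

The point of the sketch is the shape: one host, one client per server, and each server owning the tools for its own application.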

What MCP Servers Can Expose

The MCP server exposes several types of capabilities to the AI assistant:

Tools: Allow the AI to trigger specific actions within the connected application. For example, an Argo CD MCP server might expose tools like "sync application," "rollback application," or "run resource action."

Resources: Expose data and content that clients can read and use as context for LLM interactions. For example, an Argo CD MCP server might expose resources like application lists, deployment statuses, and logs.

Prompts: MCP servers can provide prompt templates to guide the LLM in formulating effective natural language queries and interactions. These prompts help steer the AI towards understanding the available capabilities and how to request them.
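The three capability types can be pictured as three registries on the server. The sketch below is a toy model under that assumption; `ToyMCPServer`, the `argocd://applications` URI, and the tool and prompt names are all made up for illustration and do not reflect the real SDK or the Argo CD MCP Server's actual catalog.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ToyMCPServer:
    """Illustrative stand-in for an MCP server; not the real SDK."""

    name: str
    tools: Dict[str, Callable[..., str]] = field(default_factory=dict)
    resources: Dict[str, Callable[[], str]] = field(default_factory=dict)
    prompts: Dict[str, str] = field(default_factory=dict)

    def tool(self, name: str):
        """Decorator that registers an action the AI can trigger."""
        def register(fn):
            self.tools[name] = fn
            return fn
        return register

    def resource(self, uri: str):
        """Decorator that registers read-only data the AI can use as context."""
        def register(fn):
            self.resources[uri] = fn
            return fn
        return register

    def describe(self) -> Dict[str, List[str]]:
        """What a client would discover when it lists capabilities."""
        return {
            "tools": sorted(self.tools),
            "resources": sorted(self.resources),
            "prompts": sorted(self.prompts),
        }

    def call_tool(self, name: str, **arguments) -> str:
        return self.tools[name](**arguments)

    def read_resource(self, uri: str) -> str:
        return self.resources[uri]()

    def get_prompt(self, name: str, **values) -> str:
        return self.prompts[name].format(**values)


server = ToyMCPServer("toy-argocd")


@server.tool("sync_application")  # hypothetical tool name
def sync_application(app_name: str) -> str:
    return f"sync requested for {app_name}"


@server.resource("argocd://applications")  # hypothetical resource URI
def application_list() -> str:
    return "guestbook"


server.prompts["diagnose_app"] = "Explain why the application {app_name} is out of sync."

print(server.describe())
```

Tools change state, resources only return data, and prompts are templates; keeping them in separate registries mirrors how a client discovers and uses each kind differently.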

How It All Connects

Communication within the MCP framework uses Standard Input/Output (STDIO) or HTTP + Server-Sent Events (SSE) as the transport mechanism. The messages themselves are formatted using JSON-RPC 2.0.
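To make the wire format concrete, here is a minimal sketch of a JSON-RPC 2.0 request and response as they might travel over the STDIO transport, where each message is a single line of JSON. The `tools/call` method and its `name`/`arguments` parameters follow the MCP specification; the tool name `sync_application` and the argument `applicationName` are assumptions chosen for illustration.

```python
import json
from itertools import count
from typing import Any, Dict, Optional

_next_id = count(1)  # JSON-RPC requests carry an id so responses can be matched


def make_request(method: str, params: Optional[Dict[str, Any]] = None) -> Dict[str, Any]:
    """Build a JSON-RPC 2.0 request object."""
    message: Dict[str, Any] = {"jsonrpc": "2.0", "id": next(_next_id), "method": method}
    if params is not None:
        message["params"] = params
    return message


def make_result(request: Dict[str, Any], result: Dict[str, Any]) -> Dict[str, Any]:
    """Build the matching JSON-RPC 2.0 success response."""
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


def frame_for_stdio(message: Dict[str, Any]) -> str:
    """Serialize to one line; json.dumps escapes any newlines inside strings."""
    return json.dumps(message, separators=(",", ":")) + "\n"


request = make_request(
    "tools/call",
    {"name": "sync_application", "arguments": {"applicationName": "guestbook"}},  # hypothetical tool
)
response = make_result(
    request,
    {"content": [{"type": "text", "text": "sync started"}], "isError": False},
)

print(frame_for_stdio(request), end="")
print(frame_for_stdio(response), end="")
```

A client would write the first line to the server's standard input and read the second from its standard output; over HTTP the same JSON objects are carried in request and event bodies instead.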

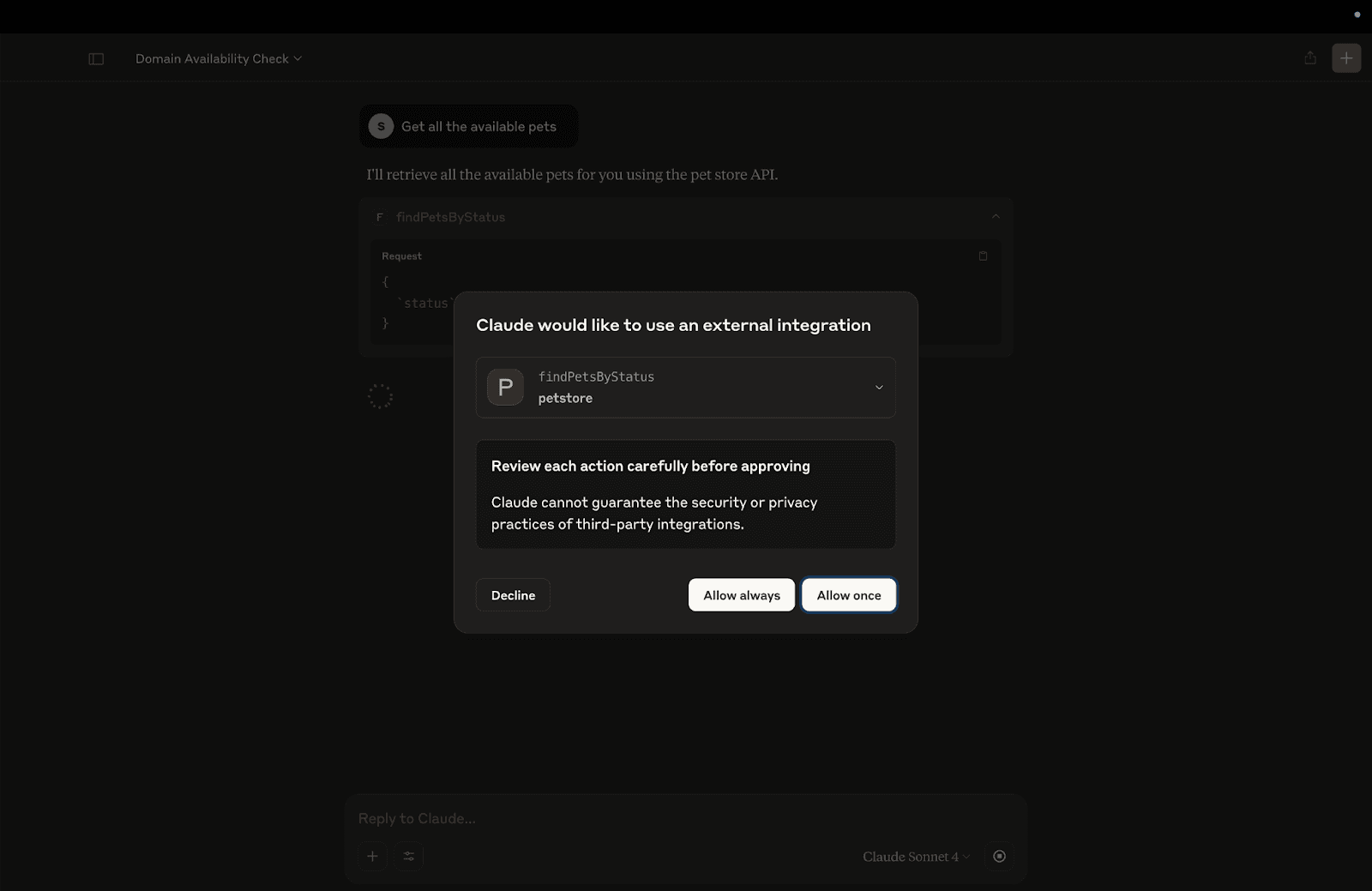

An essential aspect of MCP is the focus on security and user control. Interactions always involve a "human-in-the-loop" permissioning model, where the user explicitly grants or denies the AI assistant's request to perform an action or access data, ensuring clarity and preventing unintended operations.

Here is an example image, where the Claude Desktop client explicitly asks permission to use the findPetByStatus tool.
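The permissioning model amounts to placing an approval step between the model's intent and the tool's execution. The sketch below shows that gate in isolation; `call_with_approval`, `PermissionDenied`, and the callback signatures are hypothetical names for illustration, not an API that any MCP host exposes.

```python
from typing import Any, Callable, Dict


class PermissionDenied(Exception):
    """Raised when the user declines a tool call."""


def call_with_approval(
    tool_name: str,
    arguments: Dict[str, Any],
    run_tool: Callable[[str, Dict[str, Any]], Any],
    ask_user: Callable[[str, Dict[str, Any]], bool],
) -> Any:
    """Run the tool only if the user explicitly approves this call."""
    if not ask_user(tool_name, arguments):
        raise PermissionDenied(f"user denied {tool_name}")
    return run_tool(tool_name, arguments)


def fake_tool(name: str, arguments: Dict[str, Any]) -> str:
    # Stand-in for forwarding the call to an MCP server.
    return f"{name} ran with {arguments}"


# An approving user lets the call through.
print(call_with_approval("findPetByStatus", {"status": "available"}, fake_tool, lambda n, a: True))

# A declining user stops it before anything runs.
try:
    call_with_approval("findPetByStatus", {"status": "available"}, fake_tool, lambda n, a: False)
except PermissionDenied as exc:
    print(exc)
```

In a real host the `ask_user` step is the dialog the user sees, and the tool is never invoked when the answer is no.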

What Makes the Argo CD MCP Server Unique? An MCP Server for Platform Engineers

First-of-Its-Kind DevOps Integration

Currently, most MCP server implementations connect to general tools like chat applications, calendars, or SaaS services like GitHub/Jira. The Argo CD MCP Server is among the first to connect an AI assistant to a core infrastructure platform.

It allows GitOps workflows, usually managed via CLIs or web UIs, to be managed directly from a developer's code editor using natural language.

Supported Features in the Argo CD MCP Server

The Argo CD MCP Server lets developers perform a range of GitOps tasks directly from their AI-assisted IDE:

Application Management: List, create, update, and delete Argo CD applications.

Synchronization: Sync applications to their desired state or check their current synchronization status.

Resource Inspection: Retrieve detailed resource trees, access workload logs for troubleshooting, and run predefined actions on specific resources.

These features are tailored for developers who spend most of their time in their IDE and want to reduce the friction associated with switching contexts to manage their GitOps workflows.

Refer to the project README to learn more about the supported tools and resources.

Common Developer Workflows

Once set up, developers can use natural language for various tasks:

Status Checks: Ask "What’s the sync status for the frontend application in the staging environment?" directly from the IDE.

Troubleshooting: Ask "Show me the logs for the payment-service pod that is erroring" without switching to a terminal or dashboard.

Deployments: Ask "Sync the api-gateway application in production" to trigger a sync.

Getting Started With the Argo CD MCP Server

Setting up the Argo CD MCP server allows developers to integrate their AI assistant with their Argo CD instances. This section walks through configuring the Argo CD MCP server in the Claude Desktop client.

What You’ll Need

To get started, you'll require the following:

Node.js: Version 18 or later.

Argo CD Instance: An accessible Argo CD instance with API access enabled.

MCP-Compatible AI Assistant: An AI tool that supports MCP, such as Claude Desktop, Cursor, or Visual Studio Code (VS Code).

For these instructions, we’ll use Claude Desktop and the basic Argo CD quickstart application. The steps should be easy to adapt to your setup.

Instructions

Set Up Argo CD and Deploy an Application

Refer to the official quick start to set up and deploy a sample application. You should have a guestbook application installed on your cluster at the end of this quick start.

Configure Claude Desktop

To make Claude aware of available MCP servers, we need to configure the claude_desktop_config.json file. This file specifies how the AI assistant should connect to the MCP server.

Create a configuration file at the locations shown below.

Open up the configuration file in any text editor. Replace the file contents with this:
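As a rough sketch of what such a configuration could look like, the Python snippet below builds the JSON and prints it so it can be pasted into the file. The top-level `mcpServers` key and the file locations for macOS and Windows follow Claude Desktop's documented layout, and the server name `argocd-mcp` comes from this post. The `npx argocd-mcp@latest stdio` invocation and the environment variable names `ARGOCD_BASE_URL` and `ARGOCD_API_TOKEN` are assumptions; check them against the project README before use.

```python
import json
from typing import Any, Dict


def claude_config_path(platform: str, home: str = "~", appdata: str = "%APPDATA%") -> str:
    """Where Claude Desktop looks for its config on macOS and Windows."""
    if platform == "darwin":
        return f"{home}/Library/Application Support/Claude/claude_desktop_config.json"
    if platform == "win32":
        return f"{appdata}\\Claude\\claude_desktop_config.json"
    raise ValueError(f"no known config location for platform {platform!r}")


def build_config(base_url: str, api_token: str) -> Dict[str, Any]:
    """Assemble the config; command, args, and env names are assumptions."""
    return {
        "mcpServers": {
            "argocd-mcp": {
                "command": "npx",
                "args": ["argocd-mcp@latest", "stdio"],  # assumed package name and mode
                "env": {
                    "ARGOCD_BASE_URL": base_url,    # assumed variable name
                    "ARGOCD_API_TOKEN": api_token,  # assumed variable name
                },
            }
        }
    }


config = build_config("https://localhost:8080", "<argocd_token>")
print("Write this to:", claude_config_path("darwin"))
print(json.dumps(config, indent=2))
```

Generating the file from code rather than editing it by hand keeps the token placeholder in one place and guarantees the output is valid JSON.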

You must also configure environment variables to provide the Argo CD server address and authentication token.

For the Argo CD server address, you can use https://localhost:8080 if you are using kubectl port-forward to access it locally. Otherwise, replace it with the appropriate URL.

To obtain the token, execute the curl request below.
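One common way to obtain a token is Argo CD's session endpoint, which curl or any HTTP client can call. The sketch below builds that request in Python without sending it: `POST /api/v1/session` with a JSON body containing `username` and `password` returns a JSON object with a `token` field. The `admin` username and the placeholder password are assumptions, and whether the post's author used this exact call is not confirmed here.

```python
import json
import urllib.request


def build_session_request(base_url: str, username: str, password: str) -> urllib.request.Request:
    """Prepare a login request for the Argo CD API; nothing is sent yet."""
    body = json.dumps({"username": username, "password": password}).encode("utf-8")
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/api/v1/session",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_token(response_body: bytes) -> str:
    """Pull the bearer token out of the session response."""
    return json.loads(response_body)["token"]


login = build_session_request("https://localhost:8080", "admin", "<password>")
print(login.get_method(), login.full_url)

# To actually send it (requires a reachable Argo CD instance):
#     with urllib.request.urlopen(login) as response:
#         token = extract_token(response.read())
# A port-forwarded instance usually presents a self-signed certificate,
# so the call may additionally need an ssl context that trusts it.
```

The returned token is what goes into the API token environment variable in the Claude Desktop configuration.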

Note: Once configured, quit the Claude Desktop application and start it again so that it loads the new configuration.

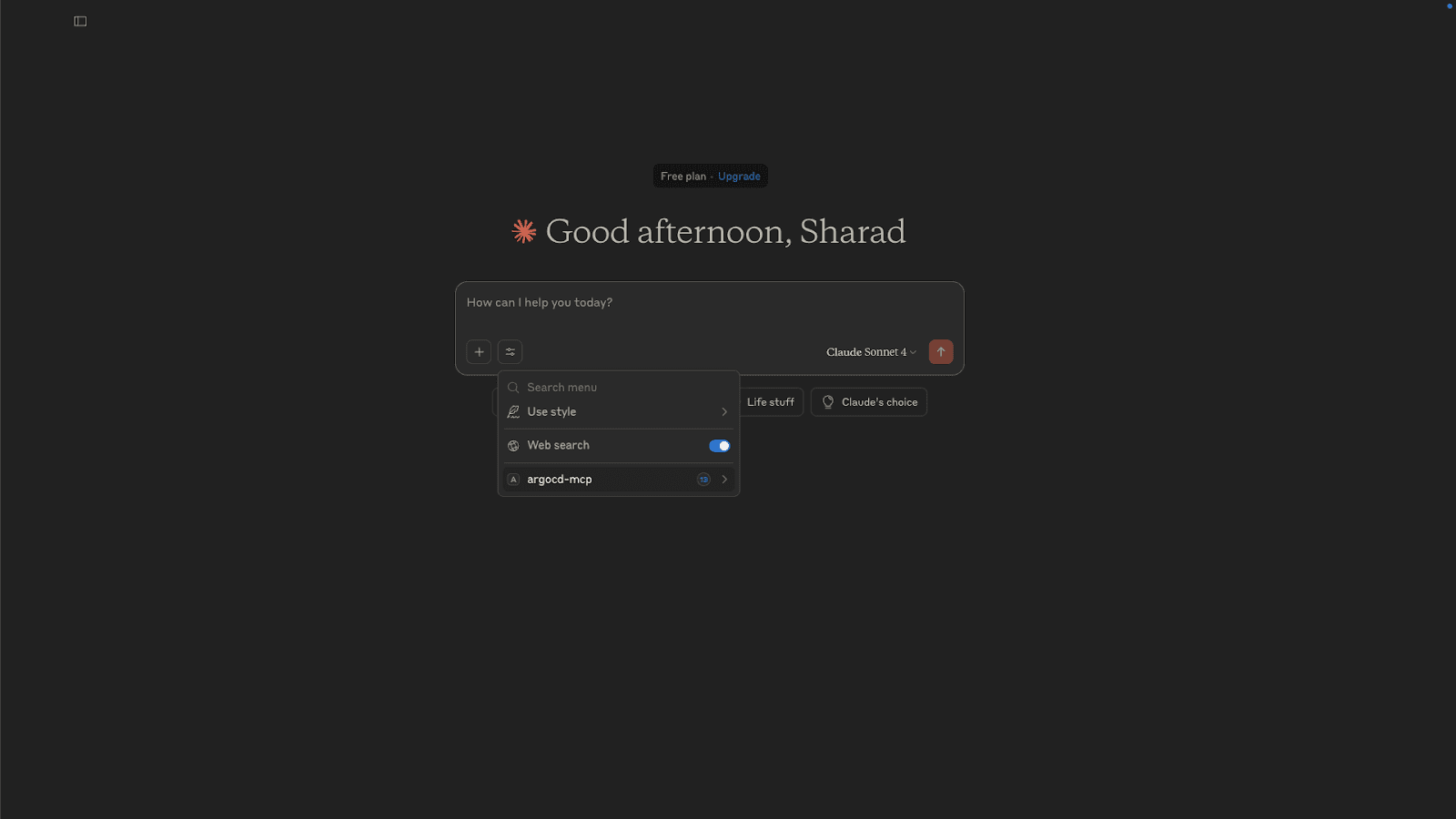

Testing the Configuration

Now, open Claude Desktop and confirm that the argocd-mcp server appears, as shown in the image below.

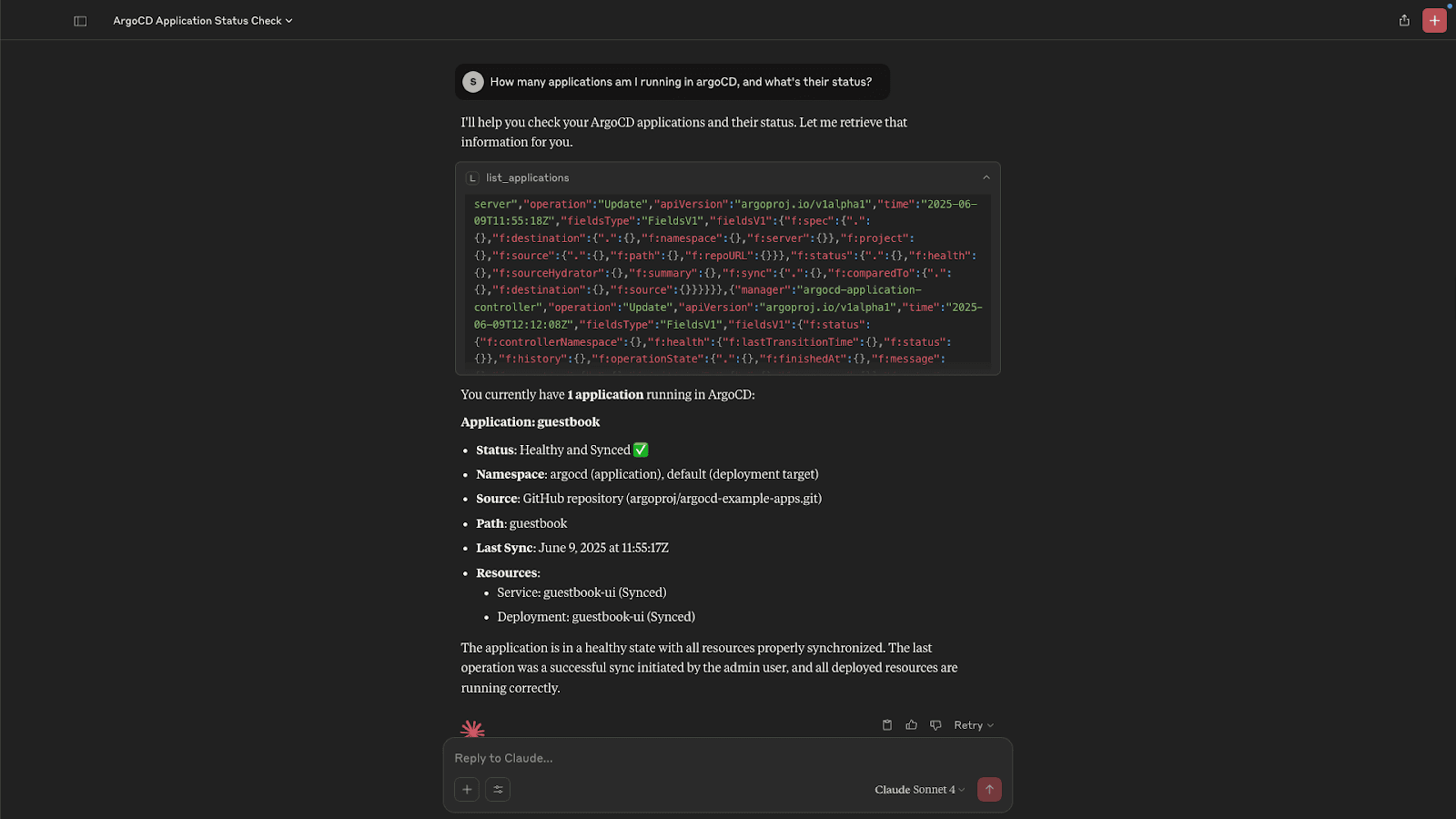

In the previous step, we already deployed a guestbook application. Now ask this question in Claude Desktop: "How many applications am I running in argoCD, and what's their status?"

Claude Desktop will ask for your permission to connect to the argocd-mcp server; allow the connection request. You should see an output as shown below.
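To answer a question like this, the assistant needs each application's name, sync status, and health status. The sketch below shows how those fields can be pulled from an Argo CD application list, where each item carries `metadata.name`, `status.sync.status`, and `status.health.status`. The sample payload is hand-written for illustration and is not output captured from a real cluster.

```python
from typing import Any, Dict, List


def summarize_applications(app_list: Dict[str, Any]) -> List[Dict[str, str]]:
    """Reduce an Argo CD application list to name, sync, and health."""
    rows = []
    # Argo CD may return "items": null when there are no applications.
    for app in app_list.get("items") or []:
        status = app.get("status", {})
        rows.append(
            {
                "name": app["metadata"]["name"],
                "sync": status.get("sync", {}).get("status", "Unknown"),
                "health": status.get("health", {}).get("status", "Unknown"),
            }
        )
    return rows


# Illustrative payload shaped like an application list response.
sample = {
    "items": [
        {
            "metadata": {"name": "guestbook"},
            "status": {
                "sync": {"status": "Synced"},
                "health": {"status": "Healthy"},
            },
        }
    ]
}

for row in summarize_applications(sample):
    print(f"{row['name']}: sync={row['sync']}, health={row['health']}")
```

With the quickstart's guestbook application deployed and synced, an answer along the lines of one application that is Synced and Healthy is what to expect.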

Conclusion

The Model Context Protocol (MCP) is more than just a technical spec; it’s a shift in how developers interact with infrastructure. By bridging AI assistants and tools like Argo CD, MCP makes it possible to manage deployments, troubleshoot issues, and run GitOps workflows using natural language, right from your IDE.

The Argo CD MCP Server is one of the first DevOps-focused implementations of this emerging standard, and it's open source, extensible, and ready for experimentation.

Try the Argo CD MCP Server

The Argo CD MCP Server, created by the original creators of the Argo Project, is now available on GitHub. Start exploring how AI assistants like Claude, Cursor, and VS Code can help you manage GitOps workflows right from your IDE.

GitHub Repo: Try it out, star the project, and contribute

Join the Akuity Discord: Ask questions, share feedback, and connect with the team

MCP Protocol Overview: Learn more about how MCP standardizes AI-infra interaction

Frequently Asked Questions about MCP Servers

What is an MCP server?

An MCP server is a bridge between AI assistants and applications, like Argo CD, that exposes tools and resources via the Model Context Protocol (MCP). It allows developers to perform actions and access data directly from their AI-assisted IDE using natural language, without needing custom integrations or CLI commands.

How does the Argo CD MCP Server work?

It exposes tools and resources via the MCP protocol, allowing AI assistants to interact with Argo CD using secure, permissioned commands (no CLI needed).

Which AI tools support MCP?

Currently, Claude Desktop offers the most complete and mature support for the Model Context Protocol (MCP), allowing users to connect the AI directly to local files, databases, and external tools through MCP servers. Cursor also supports MCP, enabling the editor to access structured external context and tooling during development workflows. Visual Studio Code can also connect to MCP servers through its agent mode. Beyond these tools, any custom AI application or agent runtime can support MCP by implementing the open MCP specification, either as a client or a server. While adoption is growing quickly, many popular AI tools and agent frameworks have not yet added first-class MCP support.

Is the Argo CD MCP Server production-ready?

It’s open source and actively maintained, but currently best suited for experimentation, internal tools, and forward-looking teams exploring AI integrations.

Do I need to know MCP to use the server?

No. The setup guides handle the heavy lifting, and once connected, you can interact with Argo CD using plain English through your AI assistant.

What is the difference between MCP and API?

MCP (Model Context Protocol) is a standardized protocol designed specifically to connect AI assistants with applications and data sources, enabling context-aware, permissioned interactions. A traditional API defines endpoints and requests for software-to-software communication, and each one is different. MCP instead provides a uniform framework for AI agents to access multiple tools, trigger actions, and retrieve data across different platforms without building custom integrations for each one.

What is the difference between MCP and MCP server?

The MCP (Model Context Protocol) is the standard or protocol that defines how AI assistants communicate with applications and data sources in a consistent, secure, and context-aware way.

The MCP server, on the other hand, is an implementation of that protocol—a running service that exposes specific tools, resources, and actions from an application (like Argo CD) so that AI assistants can interact with it using MCP.

In short: MCP is the “language” or “rules,” and the MCP server is the “speaker” that follows those rules to enable real interactions.

What MCP servers exist?

Argo CD MCP Server (by Akuity) – Connects AI assistants to Argo CD for GitOps workflows, enabling tasks like syncing applications, checking deployment status, and inspecting resources directly from an IDE.

General SaaS MCP Servers – Early implementations for common tools like Gmail, Slack, Jira, and GitHub, allowing AI assistants to perform actions or fetch data from these services.

Custom MCP Servers – Organizations can build their own MCP servers for internal applications, databases, or infrastructure platforms to standardize AI interactions across tools.

Essentially, any system can have an MCP server if it implements the MCP protocol to expose tools and resources to AI assistants.