Which Argo CD Architecture is Best? Comparing Single, Per Cluster, and Hybrid Models

Nicholas Morey

Using Argo CD to implement GitOps for Kubernetes appears simple. However, like any system, the ability to scale GitOps practices is highly dependent on the architecture you choose. This blog post will explore the three most common architectures when implementing Argo CD: single instance, per cluster instances, and a compromise between the two. I will break down the benefits and drawbacks of each one to help you decide what is appropriate for your situation.

I've updated this post with my learnings from the past year. Argo CD and the Akuity Platform have seen a number of exciting changes and updates that provide new considerations for what architecture to choose.

Comparing the Three Most Common Argo CD Architectures

Choosing the right Argo CD architecture is essential for ensuring scalability, security, and operational efficiency. The architecture you select impacts how Argo CD manages deployments, how teams interact with it, and how well it integrates with your existing Kubernetes infrastructure.

Below, I’ll dive into each architecture in detail, exploring their benefits, challenges, and ideal use cases.

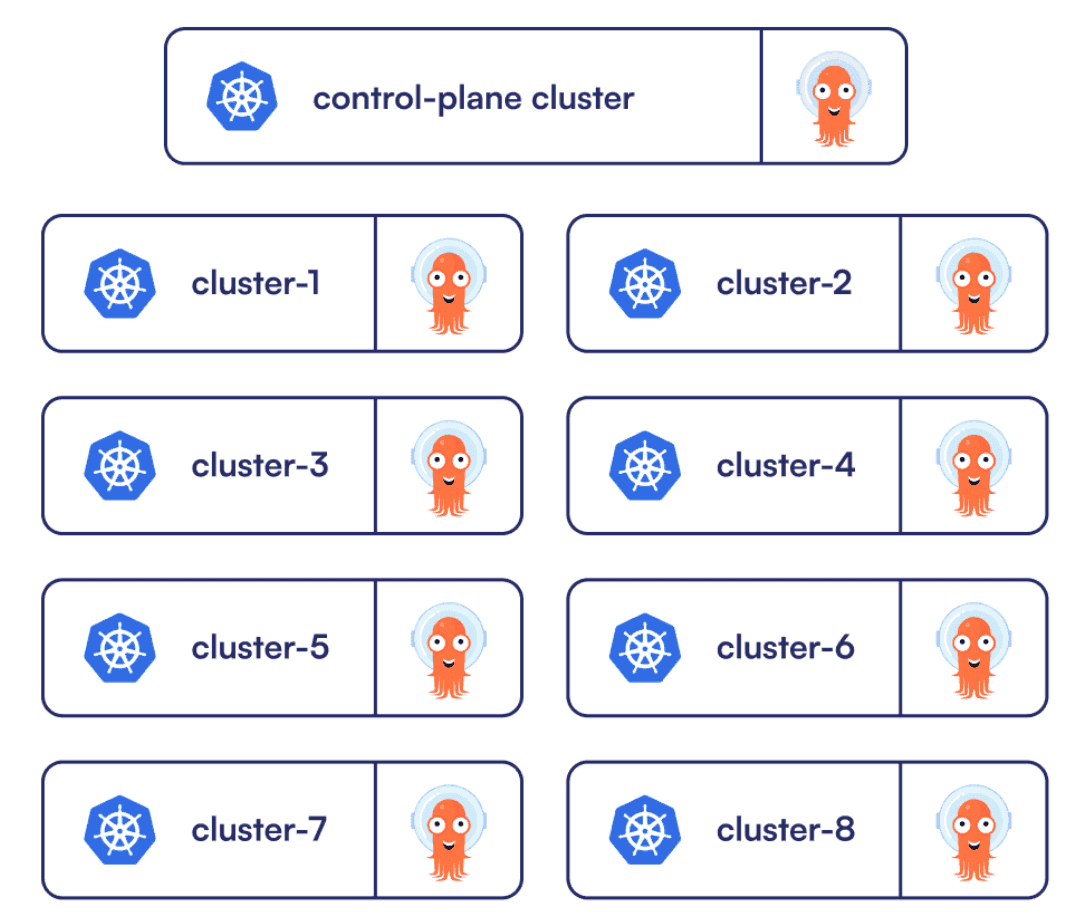

Single Argo CD Instance (Management Cluster Model)

The single control plane approach has one instance of Argo CD managing all of the clusters. This is a popular approach as it allows users to have a single view of their applications, providing a great developer experience.

Architecture Advantages

Single view for deployment activity across all clusters.

Single control plane, simplifying the installation and maintenance.

Single server for easy API/CLI integration.

Great integration with the ApplicationSet cluster generator.

Architecture Disadvantages

Scaling requires tuning of the individual components.

Single point of failure for deployments.

Admin credentials for all clusters in one place.

Requires a separate "management" cluster.

Significant network traffic between Argo CD and clusters.

How This Architecture Works

In this architecture, there's only one server URL. This simplifies logging into the argocd CLI and setting up API integrations. It also improves the operator experience with just one location for managing the configuration (e.g. repository credentials, SSO, local users, API keys, Argo CD CRDs, and RBAC policies).

The operator experience is improved even further when combined with ApplicationSets and the `cluster` generator to create an Application for each cluster registered to Argo CD. This pattern makes it incredibly easy to manage "cluster add-ons" (a standard set of services in each cluster) at scale. I gave a workshop on this pattern at ArgoCon, which you can try out for yourself.
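To make the add-on pattern concrete, here is a minimal Python sketch that mimics what the `cluster` generator does: for every cluster registered to Argo CD, it renders one Application from a template by substituting the `{{name}}` and `{{server}}` parameters. This is a simulation for illustration only, not Argo CD's implementation, and the cluster names, add-on, repository URL, and path are all hypothetical.

```python
# Hypothetical clusters registered with one Argo CD instance.
CLUSTERS = [
    {"name": "dev", "server": "https://dev.example.com"},
    {"name": "staging", "server": "https://staging.example.com"},
    {"name": "prod", "server": "https://prod.example.com"},
]

# Hypothetical Application template for a "cluster add-on".
TEMPLATE = {
    "apiVersion": "argoproj.io/v1alpha1",
    "kind": "Application",
    "metadata": {"name": "{{name}}-cert-manager", "namespace": "argocd"},
    "spec": {
        "project": "default",
        "source": {
            "repoURL": "https://git.example.com/platform/addons.git",
            "targetRevision": "main",
            "path": "cert-manager",
        },
        "destination": {"server": "{{server}}", "namespace": "cert-manager"},
    },
}


def render(value, params):
    """Recursively substitute {{key}} placeholders; always returns a new structure."""
    if isinstance(value, dict):
        return {k: render(v, params) for k, v in value.items()}
    if isinstance(value, list):
        return [render(v, params) for v in value]
    if isinstance(value, str):
        for key, param in params.items():
            value = value.replace("{{" + key + "}}", param)
    return value


def cluster_generator(clusters, template):
    """Simulate the cluster generator: one Application per registered cluster."""
    return [render(template, cluster) for cluster in clusters]


if __name__ == "__main__":
    for app in cluster_generator(CLUSTERS, TEMPLATE):
        print(app["metadata"]["name"], "->", app["spec"]["destination"]["server"])
```

Running it prints `dev-cert-manager -> https://dev.example.com` and the equivalent lines for `staging` and `prod`. Registering a fourth cluster would yield a fourth Application without touching the template, which is why this pattern scales so well for add-ons.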

If your organization is the type to delegate access based on the environment and you are concerned about having all of your applications under one instance, don't worry: you can create the same boundaries using RBAC policies and AppProjects. The project defines what can be deployed where, and the RBAC policy defines who can access the project.
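As a sketch of how those two pieces fit together, the Python below builds an AppProject that limits a hypothetical `staging` project to the staging cluster, plus the `policy.csv` lines (the format used in the `argocd-rbac-cm` ConfigMap) that grant one SSO group access to that project's Applications. The project, role, group, and URLs are hypothetical, and `destination_allowed` is a simplified stand-in using Python's `fnmatch`, not Argo CD's actual matching code.

```python
from fnmatch import fnmatch


def app_project(name, source_repos, destinations):
    """Build an AppProject: which repos may be deployed to which server/namespace pairs."""
    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "AppProject",
        "metadata": {"name": name, "namespace": "argocd"},
        "spec": {
            "sourceRepos": source_repos,
            "destinations": [
                {"server": server, "namespace": namespace}
                for server, namespace in destinations
            ],
        },
    }


def rbac_policy(project, role, sso_group):
    """Build policy.csv lines letting one SSO group manage the project's Applications."""
    return [
        f"p, role:{role}, applications, *, {project}/*, allow",
        f"g, {sso_group}, role:{role}",
    ]


def destination_allowed(project, server, namespace):
    """Simplified check: does any project destination glob-match the target?"""
    return any(
        fnmatch(server, d["server"]) and fnmatch(namespace, d["namespace"])
        for d in project["spec"]["destinations"]
    )


# Hypothetical staging project and SSO group.
staging = app_project(
    "staging",
    ["https://git.example.com/apps/*"],
    [("https://staging.example.com", "*")],
)
STAGING_POLICY = rbac_policy("staging", "staging-deployers", "staging-team")
```

With this setup, `destination_allowed(staging, "https://staging.example.com", "web")` is true while the same check against a production server is false, so the staging team can use the shared instance without being able to deploy to production.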

Scaling, Networking, and Security Considerations

With a single control plane, you have a single point of failure. Your production deployments could be affected by the actions of other environments. Suppose the staging cluster became unresponsive, causing timeouts on the kube-apiserver. This could lead to a high load on the application controller, affecting Argo CD performance for the deployment of production Applications.

This architecture also requires you to stand up and maintain a dedicated management cluster that will host the Argo CD control plane. The management cluster must have direct access to the Kubernetes API server of all your other clusters. Depending on the location of the management cluster, this could involve publicly exposing those API servers, which may raise security concerns. Or, if every cluster is in a separate VPC, this will require complex networking configuration.

The admin credentials (i.e. the kubeconfig) for all of the clusters are stored centrally on this single Argo CD instance (as secrets in the cluster). If a threat actor manages to compromise the management cluster or Argo CD instance, this will grant them widespread access.
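To see why this concentrates risk, consider Argo CD's declarative cluster setup, where each managed cluster is a Secret in the `argocd` namespace labelled `argocd.argoproj.io/secret-type: cluster` with `name`, `server`, and `config` keys. The Python sketch below builds such Secrets; the token and CA values are placeholders, and the cluster names and URLs are hypothetical.

```python
import json


def cluster_secret(name, server, bearer_token, ca_data):
    """Build a declarative Argo CD cluster Secret (credentials live in the management cluster)."""
    config = {
        "bearerToken": bearer_token,
        "tlsClientConfig": {"insecure": False, "caData": ca_data},
    }
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {
            "name": f"cluster-{name}",
            "namespace": "argocd",
            # This label is how Argo CD recognises the Secret as a cluster.
            "labels": {"argocd.argoproj.io/secret-type": "cluster"},
        },
        "type": "Opaque",
        "stringData": {"name": name, "server": server, "config": json.dumps(config)},
    }


# Every cluster's credentials end up in the same namespace of the same cluster.
SECRETS = [
    cluster_secret(n, f"https://{n}.example.com", "<token>", "<base64-ca>")
    for n in ("dev", "staging", "prod")
]
```

Anyone who can read Secrets in that one namespace effectively holds a token for every cluster in the list.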

It's also worth mentioning that the application controller must perform Kubernetes watches to gather data from each cluster. You may incur significant costs due to network traffic if the management cluster is in a different region than the other clusters.

Running a single instance means only one controller is handling all this load. Scaling will require tuning the individual Argo CD components (i.e. repo-server, application controller, api-server) as the number of clusters, applications, repositories, and managed resources increases. Managing Application controller shards is an unpleasant experience that requires manually matching shard size to clusters.
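The reason shard sizing is so manual is that the application controller shards by cluster: each cluster is assigned to exactly one controller replica. The Python sketch below assumes a hash-based assignment (FNV-1a of a cluster identifier, modulo the replica count), similar in spirit to Argo CD's default sharding. Treat the exact algorithm as an assumption, and the fleet sizes as hypothetical.

```python
def fnv1a_32(data: bytes) -> int:
    """32-bit FNV-1a hash."""
    h = 0x811C9DC5
    for byte in data:
        h ^= byte
        h = (h * 0x01000193) & 0xFFFFFFFF
    return h


def shard_for(cluster_id: str, replicas: int) -> int:
    """Assign a whole cluster to one controller shard by hashing its identifier."""
    return fnv1a_32(cluster_id.encode()) % replicas


def shard_load(apps_per_cluster: dict[str, int], replicas: int) -> list[int]:
    """Sum the Applications each shard ends up reconciling."""
    load = [0] * replicas
    for cluster_id, apps in apps_per_cluster.items():
        load[shard_for(cluster_id, replicas)] += apps
    return load


# Hypothetical fleet: one large production cluster and several small ones.
FLEET = {"prod": 4000, "staging": 300, "dev": 200, "qa": 150}

if __name__ == "__main__":
    print(shard_load(FLEET, replicas=3))
```

However many replicas you add, the `prod` cluster's 4,000 Applications land on a single shard, so balancing the controllers means matching shards to clusters by hand.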

Argo CD Per Cluster (Dedicated Instance Model)

Another typical architecture is to deploy Argo CD to each cluster. It's most common in organizations where each environment has one Kubernetes cluster. Effectively, you end up with an Argo CD instance for each environment which can simplify security and control.

Architecture Advantages

Distributes load per cluster.

No direct external access is required.

Eliminates the Argo CD traffic leaving the cluster.

An outage in one cluster won't affect other clusters.

Credentials are scoped per cluster.

Architecture Disadvantages

Requires maintaining multiple instances and duplicating configuration.

At a particular scale, each instance could still require tuning.

API/CLI integrations need to specify which instance.

Limited integration with the ApplicationSet `cluster` generator.

How the Per-Cluster Model Improves Security and Isolation

This method is more secure since Argo CD runs within the cluster, meaning you don't need to expose the Kubernetes API server of the cluster to the external control plane. Beyond that, there's no central instance containing admin credentials for all the clusters. The security domain is limited to a single Argo CD instance. Any other credentials that Argo CD requires (e.g. repo credentials, notifications API keys) can be scoped to the cluster they are in instead of shared among them.

There's no longer a significant amount of network traffic leaving the cluster to the application controller in the management cluster. This could greatly reduce cloud costs for network traffic. However, you may incur additional costs due to the additional compute resources required to run every Argo CD component in each cluster.

Scaling is improved because each Argo CD instance only handles a single cluster, and the load is effectively distributed among the environments. However, when an individual cluster reaches a certain scale (e.g. number of applications and repositories), you may still need to tune the individual Argo CD components.

In the same way that the security domain is limited to a single Argo CD instance, the blast radius for outages is also contained. If one cluster is experiencing a significant load to the point that it could prevent application deployments, it will not go on to affect other clusters.

Challenges in Developer and Operator Experience

There are, of course, downsides to this approach. This architecture negatively affects the developer experience. There's the additional cognitive load of knowing which control plane to point to when using the Argo CD CLI or web interface. You can minimize this with a solid naming strategy and consistency in the server URLs (e.g. each cluster has its own FQDN that matches its name, with Argo CD as a subdomain under it).
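A naming convention like this can be encoded once and reused by scripts and integrations. The Python sketch below assumes a hypothetical `argocd.<cluster>.<base_domain>` scheme with `example.com` as a placeholder domain, validates that the cluster name is a usable DNS label, and builds the matching `argocd login` command.

```python
import re

# A DNS label: lowercase alphanumerics and hyphens, 1-63 chars, no leading/trailing hyphen.
DNS_LABEL = re.compile(r"[a-z0-9]([-a-z0-9]{0,61}[a-z0-9])?")


def argocd_server(cluster_name: str, base_domain: str = "example.com") -> str:
    """Derive the Argo CD address for a cluster: argocd.<cluster>.<base_domain>."""
    if not DNS_LABEL.fullmatch(cluster_name):
        raise ValueError(f"cluster name {cluster_name!r} is not a valid DNS label")
    return f"argocd.{cluster_name}.{base_domain}"


def login_command(cluster_name: str) -> str:
    """Build the CLI login command for the cluster's Argo CD instance."""
    return f"argocd login {argocd_server(cluster_name)} --sso"
```

For example, `login_command("staging")` returns `argocd login argocd.staging.example.com --sso`, so a developer only needs to know the cluster name.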

It's a painful experience for operators to manage many Argo CD instances. There's a different location to log in to for each cluster, which requires maintenance of RBAC policies and API keys for each one. For that matter, any configuration of Argo CD will need to be duplicated for each cluster (e.g. SSO, repository credentials). Be careful of drift; consistency is important. Lower environments should be as production-like as possible to represent the "real" production deployments.

The burden for operators is especially heavy since you can no longer take advantage of the ApplicationSet cluster generator to automatically create Applications for clusters registered to Argo CD. Instead, you will have to rely on the git generator and a folder structure in your GitOps repository to automatically create the Applications, which results in more duplicated configuration and possibly inconsistencies between environments.
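As an illustration, assume a hypothetical repository laid out as `clusters/<cluster>/<app>`. Each cluster's Argo CD instance would then need its own ApplicationSet using the Git directory generator with a different path, such as `clusters/prod/*`. The Python below only simulates the directory matching and the `path` / `path.basename` parameters the generator provides; the real generator's glob semantics may differ in edge cases.

```python
from fnmatch import fnmatch
from pathlib import PurePosixPath


def matches(path: str, pattern: str) -> bool:
    """Match segment by segment so '*' never crosses a '/'."""
    parts, pattern_parts = PurePosixPath(path).parts, PurePosixPath(pattern).parts
    return len(parts) == len(pattern_parts) and all(
        fnmatch(part, pat) for part, pat in zip(parts, pattern_parts)
    )


def git_directory_generator(repo_dirs, pattern):
    """Simulate a Git directory generator: one parameter set per matching directory."""
    return [
        {"path": d, "path.basename": PurePosixPath(d).name}
        for d in sorted(repo_dirs)
        if matches(d, pattern)
    ]


# Hypothetical GitOps repository layout: clusters/<cluster>/<app>.
REPO_DIRS = [
    "clusters/dev/cert-manager",
    "clusters/dev/ingress-nginx",
    "clusters/prod/cert-manager",
    "clusters/prod/ingress-nginx",
    "clusters/prod/monitoring",
]

if __name__ == "__main__":
    # The prod cluster's Argo CD instance only looks at its own folder.
    for params in git_directory_generator(REPO_DIRS, "clusters/prod/*"):
        print(params["path.basename"], params["path"])
```

Note that `cert-manager` and `ingress-nginx` appear once per cluster folder; that repetition is the duplicated configuration that can drift between environments.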

Hybrid (Instance Per Logical Group)

This architecture is a compromise between the previous two: it runs one Argo CD instance per logical group of clusters. The grouping could be per team (see Conway's Law), region, or environment, whatever makes sense for your situation. You probably already have a way of grouping applications internally; this is a great place to start.
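The choice of grouping key determines how clusters are partitioned across instances. The Python sketch below groups a hypothetical fleet by one cluster label and names one hypothetical Argo CD instance per label value; the cluster names and labels are invented for illustration.

```python
from collections import defaultdict

# Hypothetical fleet with labels describing team, region, and environment.
CLUSTERS = [
    {"name": "payments-us-prod", "labels": {"team": "payments", "region": "us", "env": "prod"}},
    {"name": "payments-eu-prod", "labels": {"team": "payments", "region": "eu", "env": "prod"}},
    {"name": "search-us-prod", "labels": {"team": "search", "region": "us", "env": "prod"}},
    {"name": "search-us-dev", "labels": {"team": "search", "region": "us", "env": "dev"}},
]


def group_clusters(clusters, label):
    """Map each hypothetical Argo CD instance (one per label value) to its clusters."""
    groups = defaultdict(list)
    for cluster in clusters:
        groups["argocd-" + cluster["labels"][label]].append(cluster["name"])
    return dict(groups)
```

Grouping by `team` yields two instances with two clusters each, while grouping by `env` puts three clusters behind `argocd-prod`. The same fleet produces very different load and blast-radius profiles depending on the key.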

Architecture Advantages

Distributes load per group.

An outage in one cluster won't affect other groups.

Credentials are scoped per group.

Single view for deployment activity across a group.

Configuration duplication is reduced.

Easy to understand location for API/CLI integration.

Integration with the ApplicationSet cluster generator for the group.

Architecture Disadvantages

Requires maintaining multiple instances.

At a certain scale, each instance could still require tuning.

API/CLI integrations need to specify the right instance.

Requires a separate "management" cluster.

Duplicate configuration for ApplicationSets with the cluster generator.

How This Architecture Works

This architecture is beneficial when running multiple clusters per environment. It reduces the pain of maintaining too many instances of Argo CD. The RBAC, AppProject, and other configurations will likely be similar for all of the clusters managed by an instance. So the configuration duplication is reduced compared to running an instance for each cluster.

The groups partition the load, which distributes the burden on the application controller, repo server, and API server. It also allows you to limit the blast radius of what can be impacted by Argo CD, which is a great approach for security and reliability. The grouping isn't a perfect solution, though, since depending on the size of the clusters, it may still require tuning the individual Argo CD components.

Within each group of clusters, you can take advantage of the ApplicationSet cluster generator, which will help keep the clusters in that group consistent. However, you will need an ApplicationSet for each Argo CD instance. Again, there is less duplication, but still some.

Developer Experience and Management Considerations

The developer experience is improved compared to the instance per cluster architecture. Following an understood convention for the grouping will reduce the cognitive burden of knowing where to point their CLI and API integrations for Argo CD.

This method still requires a management cluster to host the Argo CD instances. Fortunately, by installing the Argo CD instances as namespace-scoped, you can get away with using one cluster for all of them (of course, this introduces a blast radius problem).

The Akuity Platform - An Alternative Approach

The Akuity Platform provides the benefits of the instance per cluster model and the single instance architecture without compromise. The agent-based architecture provided by the Akuity Platform enables Argo CD to scale to large enterprise use cases encompassing thousands of clusters and applications using a single control plane (seriously, we tested with 50,000 Applications and 1,000 clusters).

Key Advantages of the Akuity Platform

Load is distributed per cluster.

Single pane of glass for management.

No "management" cluster to maintain.

Does not require external access to clusters.

No single point of failure.

Application Controller is sized correctly for the cluster.

Delegate components to specific clusters.

Built-in disaster recovery features.

API and CLI for automation.

AI Assistant for first-line support.

Open-source Argo CD components - with the familiar interface.

Full support for the ApplicationSet cluster generator.

Potential Trade-Offs and Considerations

Paying for Argo CD instead of the cloud resources.

Less control over the platform hosting Argo CD. Self-hosted Akuity Platform is available, giving you full control of the platform.

Limited declarative management of Argo CD settings. This has been solved with the introduction of Declarative Management for the Akuity Platform and the launch of the Akuity Terraform provider.

How the Akuity Platform Works (Agent-Based Architecture)

The agent runs inside the cluster and has outbound access back to the control plane. This architecture significantly reduces network traffic between the control plane and the cluster. The security concerns are gone since it does not require direct cluster access or admin credentials. The agent is running with a service account in your managed cluster, talking directly to the API server over the cluster network. It even enables an external Argo CD instance to connect to clusters where exposing them is challenging, like a cluster on your laptop.

The agent architecture enables the Akuity Platform to delegate the repo-server, applicationset-controller, and image-updater functions to a specific cluster. This flexibility allows for easy access to a private internal Git server, or for running these components securely on a cluster that is especially suited to them.

Simplified Argo CD Management and Centralized Visibility

The Akuity Platform simplifies the experience of operating Argo CD. There's no longer a need for a dedicated management cluster to host it. The Akuity Platform will host the Argo CD instance and the CRDs. The configurations can be managed declaratively using the akuity CLI. You can also use the wizards in the dashboard to craft configurations typically represented in complex YAML files, like notification services. The automatic snapshotting and disaster recovery features of the Akuity Platform eliminate the "single point of failure" concern.

Thanks to the scalability of a single Argo CD instance on the Akuity Platform, it's possible to use a single instance for everything. This creates the perfect architecture to take advantage of the ApplicationSet cluster generator. Every agent deployed to your managed clusters is registered in Argo CD, making it available to the generator. By simply deploying an agent to your cluster, Argo CD can bootstrap it with any Applications using the ApplicationSet.

It provides the same visibility benefits as the single Argo CD instance architecture with a central location to view all of the organization's applications. The platform goes beyond the open-source offering by adding a dashboard for each instance that provides metrics on application health and sync histories. It adds an audit log of all the activity across the Argo CD instances in your organization, making compliance reporting significantly more manageable.

AI-Powered Assistance and Automation

Typically a platform team is serving many more developers than their team size, and ad-hoc questions about why an Application is unhealthy or out-of-sync can take up a lot of the platform team's time. A unique offering of the Akuity Platform is the integration of the AI Assistant. It is available as an extension of the open-source UI for Argo CD instances on the Akuity Platform. The assistant will look at the logs and events for the Application and answer questions in plain English using that context. The developers can now direct their questions to the AI Assistant as the first line of support, saving the platform team time.

Addressing Cost and Management Concerns of a SaaS Argo CD Solution

No solution is really without compromise. When using a SaaS product, you opt to give up some control over the platform. At the same time, you can take advantage of the experience of the creators of the Argo Project (and founders of Akuity) to use Argo CD without maintaining the underlying infrastructure.

Of course, there's also the cost. It may seem like a big difference when comparing the cloud resource cost directly to the cost of an Argo CD SaaS offering. But considering the engineering hours spent maintaining, tuning, and securing the open-source offering, the difference may not be as significant as you think. Fortunately, any time spent learning to use Argo CD will not be lost. The Akuity Platform provides the same familiar interface (and APIs).


Open-source installations of Argo CD will typically be set up to manage themselves with an Application. Like any other Application, it will define how to deploy Argo CD into the cluster with all its configurations from a Git repo. A SaaS offering may limit how you can manage these settings, preventing Argo CD from managing itself. You may need to use the platform's dashboard, an API, or an infrastructure-as-code (IaC) tool. Akuity has solved this challenge with the introduction of Declarative Management for the Akuity Platform and the launch of the Akuity Terraform provider.
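For reference, a self-managing setup in open-source Argo CD is just an Application whose destination is the cluster Argo CD runs in (`https://kubernetes.default.svc`) and whose source is the Git path holding the Argo CD installation. The Python sketch below builds such a manifest; the repository URL and path are hypothetical, and leaving out pruning is a cautious choice for this sketch rather than a requirement.

```python
import json


def self_managed_app(repo_url: str, path: str, revision: str = "main") -> dict:
    """An Application that deploys Argo CD's own manifests into the cluster it runs in."""
    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "Application",
        "metadata": {"name": "argocd", "namespace": "argocd"},
        "spec": {
            "project": "default",
            "source": {"repoURL": repo_url, "targetRevision": revision, "path": path},
            # The in-cluster API server: Argo CD targets the cluster it is running in.
            "destination": {"server": "https://kubernetes.default.svc", "namespace": "argocd"},
            # Revert manual drift automatically; pruning is left off as a cautious default.
            "syncPolicy": {"automated": {"selfHeal": True}},
        },
    }


if __name__ == "__main__":
    # Hypothetical repository holding the Argo CD installation and its configuration.
    app = self_managed_app("https://git.example.com/platform/argocd.git", "argocd")
    # kubectl accepts JSON, so this output can be piped to `kubectl apply -f -`.
    print(json.dumps(app, indent=2))
```

This is the kind of manifest a SaaS offering may not let you apply to its hosted instance, which is why declarative management and the Terraform provider matter there.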

Argo CD Managing Argo CD (Self-Managed Argo CD Architecture)

Advantages

Provide application teams with greater control.

Separation of concern between infrastructure and applications.

Great for edge clusters that can run in "core" mode.

Disadvantages

Management overhead and duplicated configuration.

Extra resource usage in the clusters.

Complexity of the implementation.

Limited visibility from central instance.

A final note on a special architecture that is both per-cluster and central to all clusters.

The entire Argo CD installation and configuration is represented by Kubernetes resources (e.g. Deployments, ConfigMaps, CRDs). Argo CD is designed to manage the deployment of Kubernetes resources. Therefore, it's possible to have Argo CD managing itself and other Argo CD instances.

How Self-Managed Argo CD Works

In this architecture, there is a central Argo CD instance on a management cluster that is operated by the platform team. This instance is used to bootstrap the Kubernetes clusters with a separate Argo CD instance used for the applications.

Since the Argo CD instances running in the application clusters have a limited blast radius, it's common for application teams with sufficient Kubernetes knowledge to own Argo CD and be responsible for its configuration. This type of implementation requires much of the configuration (e.g. SSO and repository credentials) to be provided as a service by the platform team, and may even call for a "DevOps Engineer" embedded in the application team.

Using Core Mode for Edge Clusters

Another ideal use case for this architecture is edge clusters, where the child Argo CD instance runs in "core" mode, which is effectively headless Argo CD. With this installation mode, you have a fully functional GitOps engine capable of retrieving the desired state from the source and applying it to the edge cluster, but without the web UI, API, Argo CD RBAC (Kubernetes RBAC only), and SSO. This installation mode saves on resources, which can be especially beneficial for edge clusters running on limited hardware.

The central Argo CD instance is essentially deploying a GitOps engine to each cluster to keep the state in sync, without all the nice-to-haves for end-users. Compared to the pure central Argo CD architecture, the central instance only requires connectivity to the edge cluster during bootstrapping and for upgrades. Otherwise, the Argo CD instance in core mode will connect out from the edge cluster to retrieve the desired state.
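One way to express the bootstrapping step is an Application on the central instance per child cluster, pointing at the upstream `manifests` directory of the Argo CD repository and selecting `core-install.yaml` for edge clusters or `install.yaml` otherwise via `directory.include`. The Python sketch below builds those manifests; the cluster names and servers are hypothetical, and `stable` is used only as an example revision (pinning a release tag is the safer choice).

```python
ARGO_CD_REPO = "https://github.com/argoproj/argo-cd.git"


def child_argocd_app(cluster_name: str, server: str, version: str, edge: bool) -> dict:
    """Application on the central instance that installs Argo CD into a child cluster."""
    # Edge clusters get the headless "core" install; others get the full install.
    manifest = "core-install.yaml" if edge else "install.yaml"
    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "Application",
        "metadata": {"name": f"argocd-{cluster_name}", "namespace": "argocd"},
        "spec": {
            "project": "default",
            "source": {
                "repoURL": ARGO_CD_REPO,
                "targetRevision": version,
                "path": "manifests",
                # Only apply the one install manifest from the directory.
                "directory": {"include": manifest},
            },
            "destination": {"server": server, "namespace": "argocd"},
            # Sync is left manual so bootstrap and upgrades are deliberate actions.
            "syncPolicy": {"syncOptions": ["CreateNamespace=true"]},
        },
    }


# Hypothetical fleet: one application cluster and one edge cluster.
CHILDREN = [
    child_argocd_app("team-a", "https://team-a.example.com", "stable", edge=False),
    child_argocd_app("store-042", "https://store-042.example.com", "stable", edge=True),
]
```

The child instance then owns the reconciliation of its own cluster, while the central instance only manages the Argo CD installation itself.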

Challenges of Self-Managed Argo CD

The disadvantages of this architecture are mainly complexity and limited visibility. The central Argo CD instance will only have visibility into the Argo CD resources in the application (or edge) clusters, not the resources managed by the Argo CD instances in those clusters. This is another advantage of the Akuity Platform's agent-based model, where it's effectively a "core" install in each cluster but with the added benefit of complete visibility from the central Argo CD instance.

The complexity in this architecture should not be taken lightly. There's no longer a single reconciliation loop but, instead, a central reconciliation and one for each cluster. The same goes for the configuration of Argo CD and the Applications that define how to deploy resources to the cluster. Without sufficient observability tooling in place, the layers of Argo CD could become a burden on the platform and application teams.

Final Thoughts: Making Argo CD Work for Your Infrastructure

The number of Argo CD instances you will need depends on several factors, including the size and complexity of your environment, the number of users accessing the system, and the workloads the instances will handle. You can determine the optimal architecture and appropriate compromises by carefully considering these factors and your organization's situation.

As a rule of thumb, I'd recommend using a single Argo CD instance if you are new to Kubernetes and have a small number of clusters (three or fewer) and applications (100 or fewer). If your organization implements a cluster per environment (e.g. dev, stage, prod), it typically makes sense to use one Argo CD instance per cluster.

Suppose you already have Argo CD in your environment and are hitting limitations on scaling. In that case, it may be time to transition to an instance per logical group or consider the Akuity Platform, which re-architects Argo CD to be enterprise-ready and scalable by default.
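These rules of thumb can be read as a small decision function. The Python sketch below is one interpretation of them: the order of the checks (scaling limits first) is an assumption, and the fallback string is a placeholder for cases the rules above don't cover.

```python
def suggest_architecture(
    clusters: int,
    applications: int,
    new_to_kubernetes: bool,
    cluster_per_environment: bool,
    hitting_scale_limits: bool,
) -> str:
    """Encode the rules of thumb; returns a starting point, not a verdict."""
    # Existing installations that are hitting scaling limits.
    if hitting_scale_limits:
        return "instance per logical group, or an agent-based platform"
    # Small footprint and new to Kubernetes.
    if new_to_kubernetes and clusters <= 3 and applications <= 100:
        return "single instance"
    # One cluster per environment (e.g. dev, stage, prod).
    if cluster_per_environment:
        return "instance per cluster"
    # Not covered by the rules of thumb: weigh the trade-offs of each architecture.
    return "compare the trade-offs of each architecture"
```

For example, `suggest_architecture(2, 40, True, False, False)` returns `"single instance"`, while the same inputs with `hitting_scale_limits=True` point toward grouping or an agent-based platform.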

Akuity aims to truly enhance the experience of using Argo. Don't just take our word for it: our users love the Akuity Platform so much that they have given talks about it at ArgoCon! MLB switched from open-source Argo CD to the Akuity Platform to address their challenges with sharding the application-controller, UI slowness with thousands of Applications, caching, Git traffic, and monitoring. They took advantage of working directly with Akuity, the creators of the Argo Project.

If you want insights on where to start with Akuity or Argo CD, schedule a technical demo with our team or review the “Getting started” guide.

Additional Resources

Enjoyed this blog post? To get the most out of your Argo CD learning experience, check out these resources:

[Blog Post]: Argo CD Architecture Redesigned

[Video]: Introduction to Argo CD Using the Akuity Platform

[Video]: Creating a Fully Managed Kubernetes GitOps Platform with Argo CD

Frequently Asked Questions on Argo CD Architecture

What is Argo CD architecture?

Argo CD architecture refers to how Argo CD instances are structured and deployed to manage Kubernetes clusters using GitOps. It defines how control planes, application controllers, and API servers are organized to handle deployments, scale workloads, and provide visibility. Common architectures include a single instance managing all clusters, per-cluster instances for isolation and security, and hybrid models grouping clusters logically to balance visibility and load. The architecture choice impacts scalability, security, operational overhead, and developer experience.

How does Akuity's Platform provide architecture enhancements?

Akuity’s Platform enhances Argo CD by combining the benefits of single-instance and per-cluster architectures with an agent-based design. It distributes load per cluster while providing a central “single-pane” view for management, eliminates the need for a dedicated management cluster, and reduces network traffic and security risks. The platform adds features like AI-assisted support, disaster recovery, dashboard metrics, and full ApplicationSet integration. These enhancements simplify operations, improve scalability, and make managing large, multi-cluster deployments more efficient.

What is the best Argo CD architecture for managing multiple clusters?

The best architecture depends on scale and security needs: single-instance offers central visibility, per-cluster improves isolation, and hybrid balances visibility with distributed load. Small deployments typically use a single instance, while large environments benefit from hybrid or agent-based solutions like the Akuity Platform.

How does Argo CD handle deployments across multiple Kubernetes clusters?

Argo CD manages multiple clusters by connecting each cluster to an Argo CD instance, rendering applications from Git repositories, and applying manifests via GitOps. Depending on the architecture, a single control plane or multiple instances distribute the workload and maintain synchronization. Akuity provides a centralized system for easy multi-environment management.